Neural 3D Reconstruction and Immersive VR Visualization of Row Crops Across Phenological Growth Stages

Abstract

Plant phenotyping in precision agriculture increasingly requires high-fidelity three-dimensional reconstruction and accessible visualization methods. This study presents an integrated pipeline that combines Neural Radiance Fields (NeRF), 3D Gaussian Splatting (G-Splat), and Virtual Reality (VR) visualization for comprehensive plant analysis across developmental stages. We collected multi-view imagery of finger millet, proso millet, mungbean, and field pea under controlled greenhouse conditions, aligning data acquisition with the standardized BBCH phenological scale. Camera poses were estimated with GLOMAP, and scenes were then reconstructed with both Nerfacto and G-Splat implementations. Quantitative evaluation with PSNR, SSIM, and LPIPS revealed complementary strengths: G-Splat achieved superior structural fidelity, while NeRF provided greater perceptual realism. Both reconstruction types were integrated into an immersive VR greenhouse environment deployed on Meta Quest headsets, where they maintained consistently high frame rates. This framework establishes a practical foundation for incorporating neural reconstruction and immersive technologies into agricultural phenotyping workflows, supporting both research applications and educational engagement.
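Of the three metrics above, PSNR is the simplest to state: PSNR = 10 · log10(MAX² / MSE), where MSE is the mean squared error between a rendered view and the held-out reference photograph, and MAX is the largest possible pixel value. Higher PSNR and SSIM indicate closer agreement, while lower LPIPS indicates closer perceptual similarity. The abstract does not say how the metrics were computed, so the following is only a minimal sketch of the standard PSNR definition, not the authors' evaluation code. It assumes both images are floating-point arrays of the same shape scaled to [0, 1]. The function name `psnr` is hypothetical.

```python
import numpy as np


def psnr(rendered: np.ndarray, reference: np.ndarray, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio (dB) between a rendered view and its reference.

    Assumption (not from the source): pixel values are floats in [0, max_val].
    """
    if rendered.shape != reference.shape:
        raise ValueError("rendered and reference images must have the same shape")
    # Mean squared error over every pixel and color channel, computed in float64.
    diff = rendered.astype(np.float64) - reference.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical images: PSNR is unbounded
    return float(10.0 * np.log10(max_val ** 2 / mse))


# Example: a uniform error of 0.1 gives MSE = 0.01, so PSNR is about 20 dB.
reference = np.zeros((4, 4, 3))
rendered = reference + 0.1
print(round(psnr(rendered, reference), 6))
```

Because PSNR averages squared pixel errors, it rewards exact pixel agreement. This is one reason a method can lead on PSNR or SSIM while trailing on a learned perceptual metric such as LPIPS.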

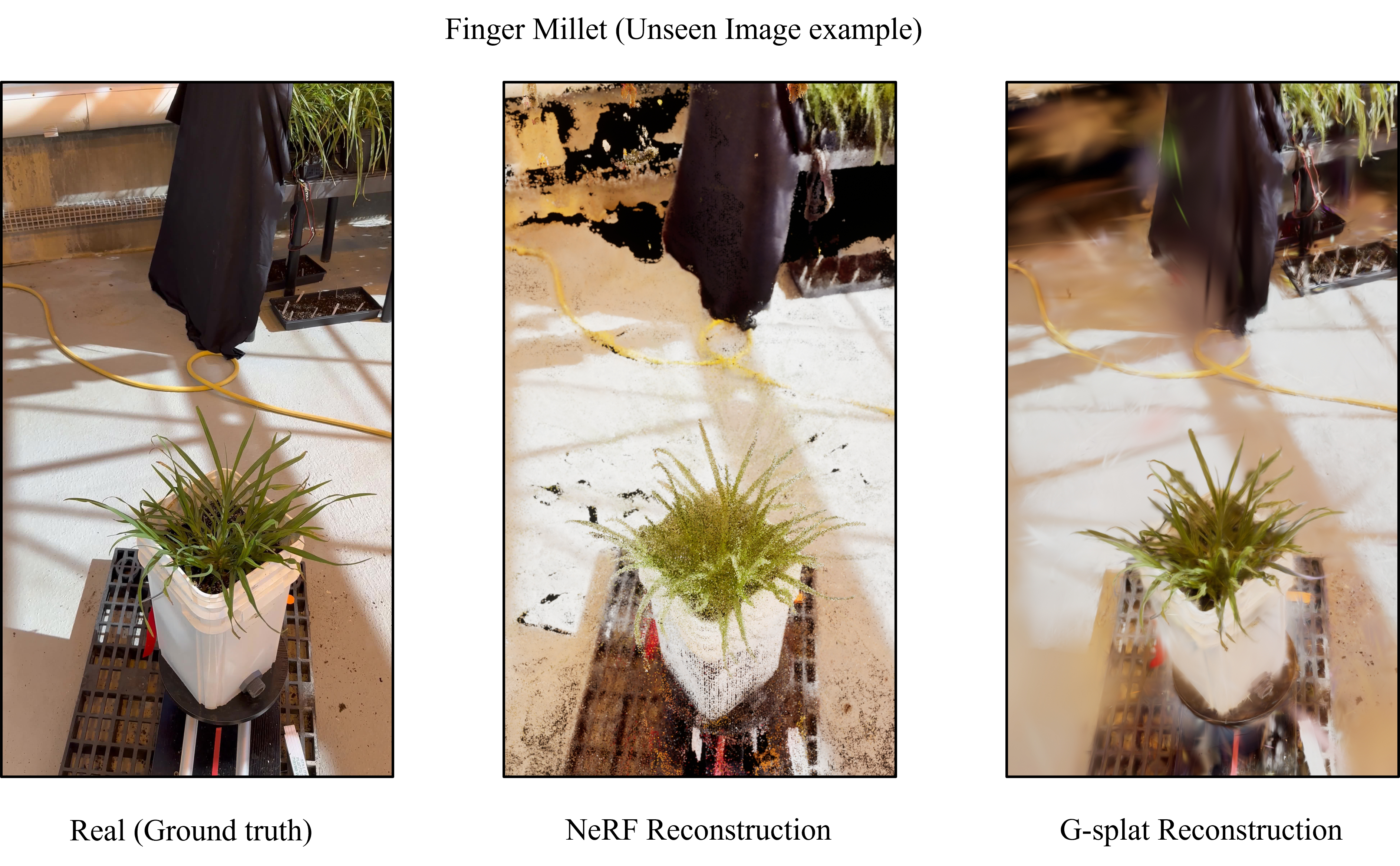

3D Reconstruction Across Growth Stages

NeRF-based 3D point-cloud reconstructions capture the full growth cycle of several crops, from early development to maturity. Each model represents a distinct growth stage, giving a consistent and detailed 3D view of how plant structure and morphology change over time.
